Blog

Popular Blog

Data Science

Project Management

Digital Marketing

Robotic Process Automation

Agile

Data Analytics

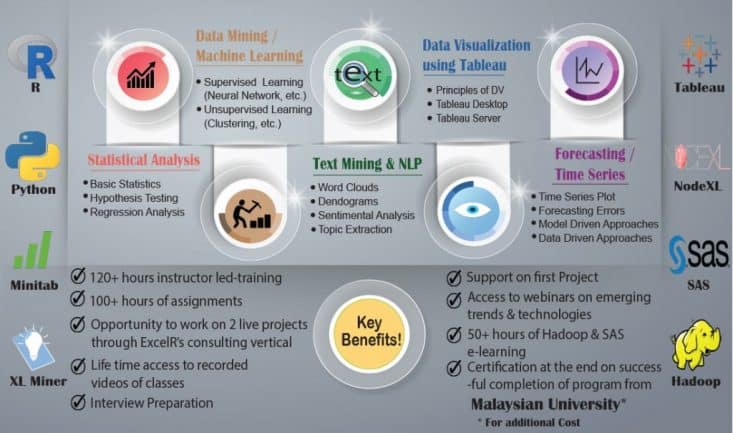

Business Analytics